Problem

Autonomous robots that cannot perceive their environment accurately cannot make good decisions, regardless of how sophisticated their decision-making algorithms are. The VEX AI Competition's existing robot platform includes GPS, an AI Vision System, and a Sensor Fusion Map, but the resulting world model has a critical limitation: it only captures what is directly in the robot's forward field of view. Objects that move out of that cone stay frozen at their last known position in the map, so the map's accuracy degrades rapidly in a dynamic match where game elements and opposing robots move continuously. More critically, the system has no concept of the Z-axis: it cannot determine how high it or another robot has elevated on the field's Elevation Bars, which directly determines end-of-match scoring. An AI system's decisions can be, at best, only as good as its sensory model, and this platform's sensory model is fundamentally incomplete.

Solution

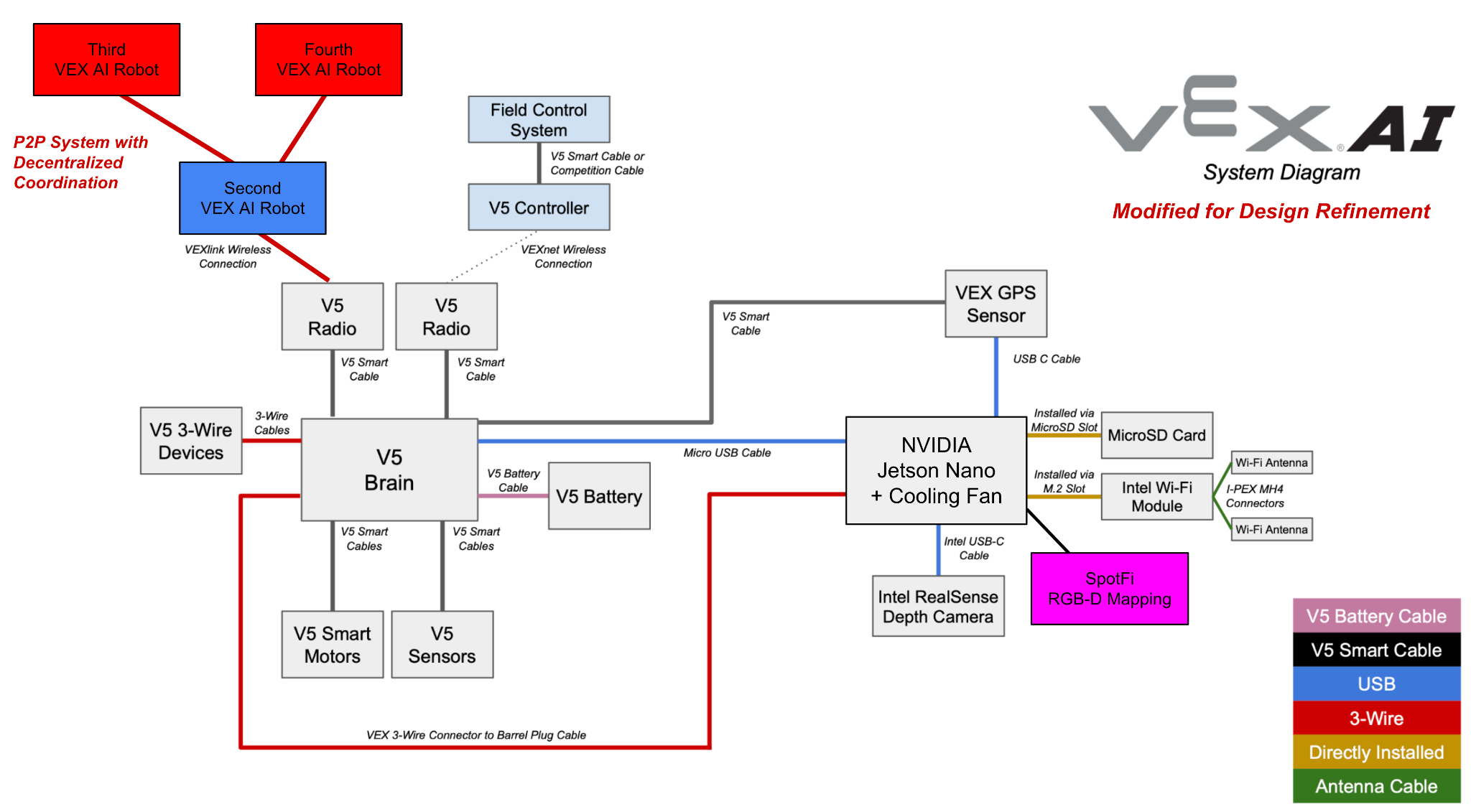

This Georgia Tech CS 6675 research paper proposes a concrete hardware and software architecture that extends the VEX AI robot's spatial awareness beyond its current field-of-view limitations. The baseline design adds SpotFi — a WiFi-based localization algorithm — for precise real-time positioning without GPS drift, and an RGB-D depth camera for 3D environment reconstruction that captures full spatial geometry including elevation. A design refinement phase extended the single-robot architecture to a peer-to-peer decentralized coordination network, enabling multiple robots to share sensor data in real time and maintain a shared world model that no individual robot could construct alone. Evaluation metrics, failure modes, and degradation pathways were analyzed to give the proposed system a realistic engineering assessment rather than a theoretical one. The paper frames the improvements as actionable, testable enhancements grounded in existing technology — not speculative research.

Skills Acquired

- VEX AI — the autonomous robotics competition platform that provided the system under analysis. Understanding the VEX AI stack — its GPS module, AI Vision System, and Sensor Fusion Map — was prerequisite to identifying where its perception model breaks down and what hardware additions would meaningfully address the gaps.

- RGB-D Mapping — the use of RGB-D (color plus depth) cameras to reconstruct 3D spatial geometry in real time. RGB-D data provides the Z-axis awareness the existing vision system lacks, enabling the robot to track object elevation, assess Elevation Bar occupancy, and build a volumetric map of the field rather than a 2D projection.

- SpotFi — a WiFi Channel State Information-based localization algorithm that estimates device position from signal propagation patterns across access points. SpotFi was proposed as the primary localization layer because it operates without line-of-sight requirements and achieves sub-meter accuracy in indoor environments where GPS signals degrade.

- NVIDIA Jetson Nano — the embedded computing platform proposed to handle the onboard computer vision and depth processing workload. The Jetson Nano's GPU-accelerated inference capabilities make it appropriate for real-time RGB-D processing at the edge without requiring a connection to external compute resources.

- P2P Networks — the decentralized coordination architecture proposed in the design refinement phase. A peer-to-peer network between robots allows each robot to share its local sensor observations with teammates, building a shared world model that reflects the full field state rather than each robot's limited individual perspective.

- Sensor Fusion — the integration of data from multiple sensor modalities (GPS, vision, depth, WiFi localization) into a unified spatial representation. Sensor fusion is the technique that makes the proposed architecture work: no single sensor provides complete spatial information, but the combination addresses all identified gaps in the existing system.

What makes this architecture meaningful is not any single component but how the components together address specific, documented failure modes in the existing system.

Deep Dive

The VEX AI Robotics Competition pits fully autonomous robots against each other on a 12×12-foot field. No human drivers — just onboard AI making every decision in real time. The system already includes GPS sensors, an AI Vision System, and a Sensor Fusion Map. So what's the problem?

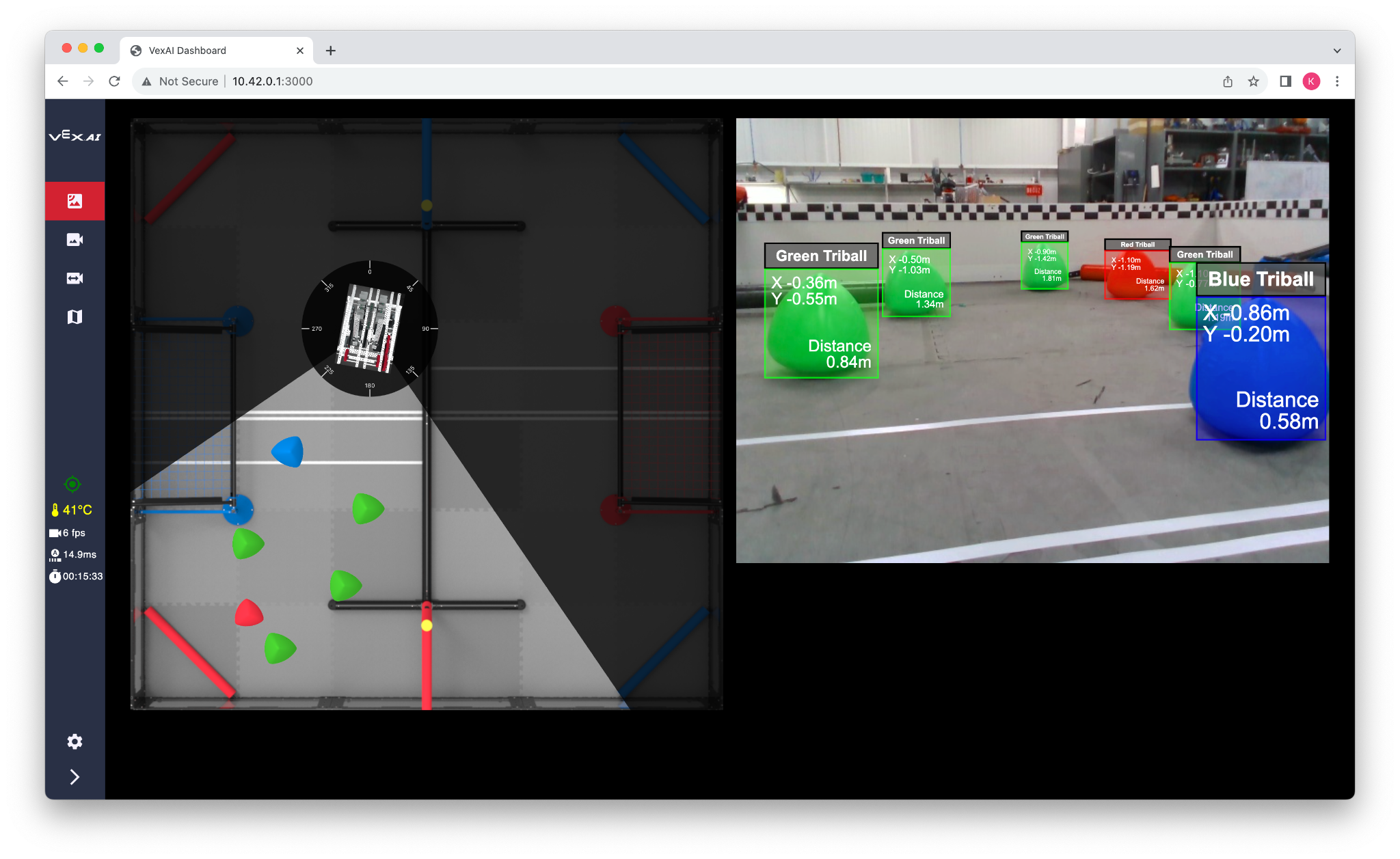

The Sensor Fusion Map only sees what's directly in front of each robot — like a spotlight. Objects that move out of that field of view are recorded at their last known position, not their current one. A robot repositioning itself toward a scoring element might arrive to find nothing there. And critically, the system has no concept of the Z-axis: it cannot determine how high it or another robot has elevated on the field's Elevation Bars, which directly impacts end-of-match scoring.

The Problem Space

The VEX Robotics Competition (VRC) is the world's largest robotics competition according to Guinness World Records, with over 30,000 students competing. In 2019, VEX introduced an AI variant, the VEX AI Competition, in which robots operate fully autonomously using onboard AI. Despite its ambition, the AI competition has seen limited adoption: the 2024 VEX AI World Championship hosted only 60 teams, compared to 820 teams at the standard high school championship.

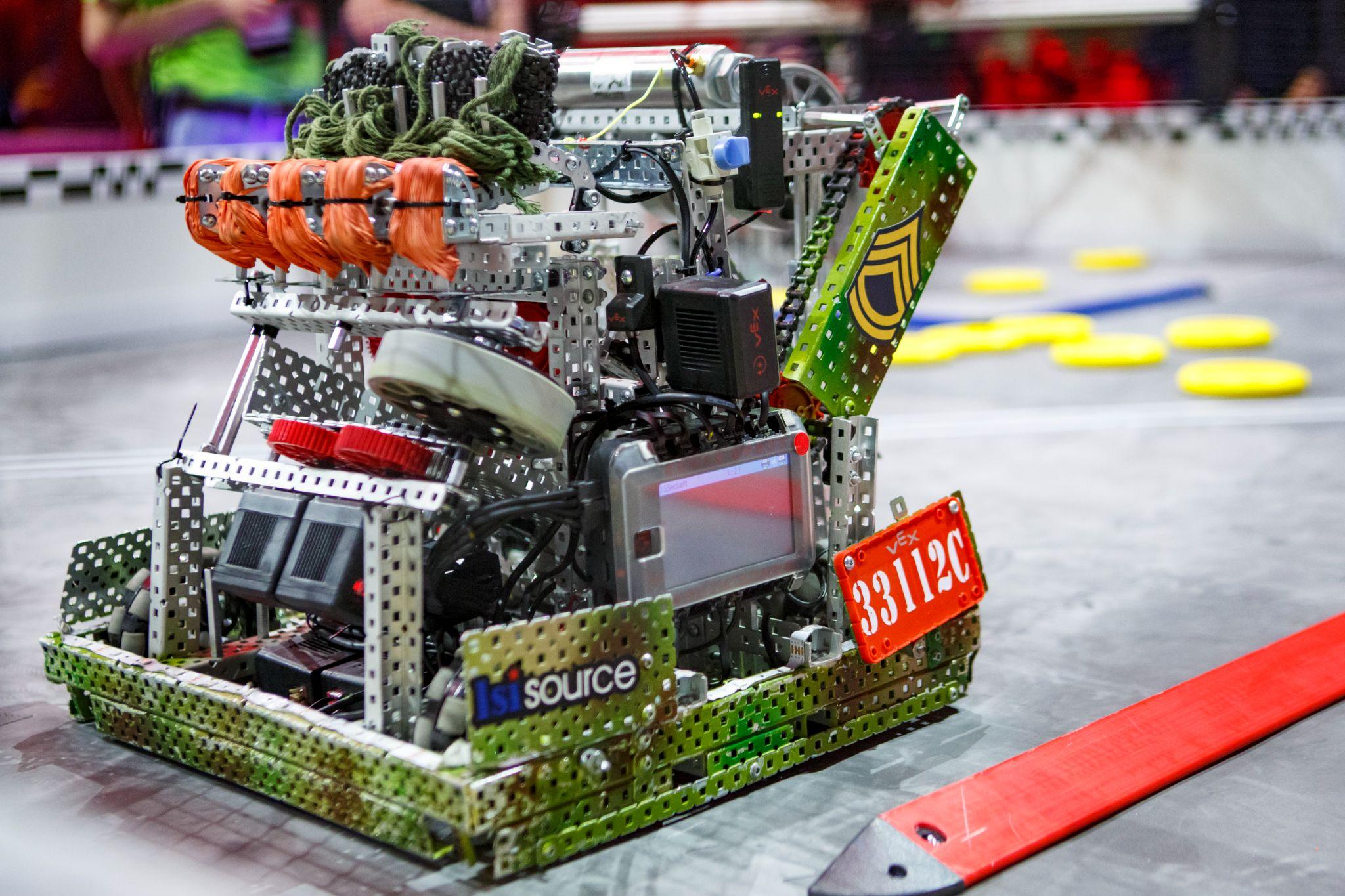

Figure 1. Metallic VEX V5 Robot at the VEX World Championship.

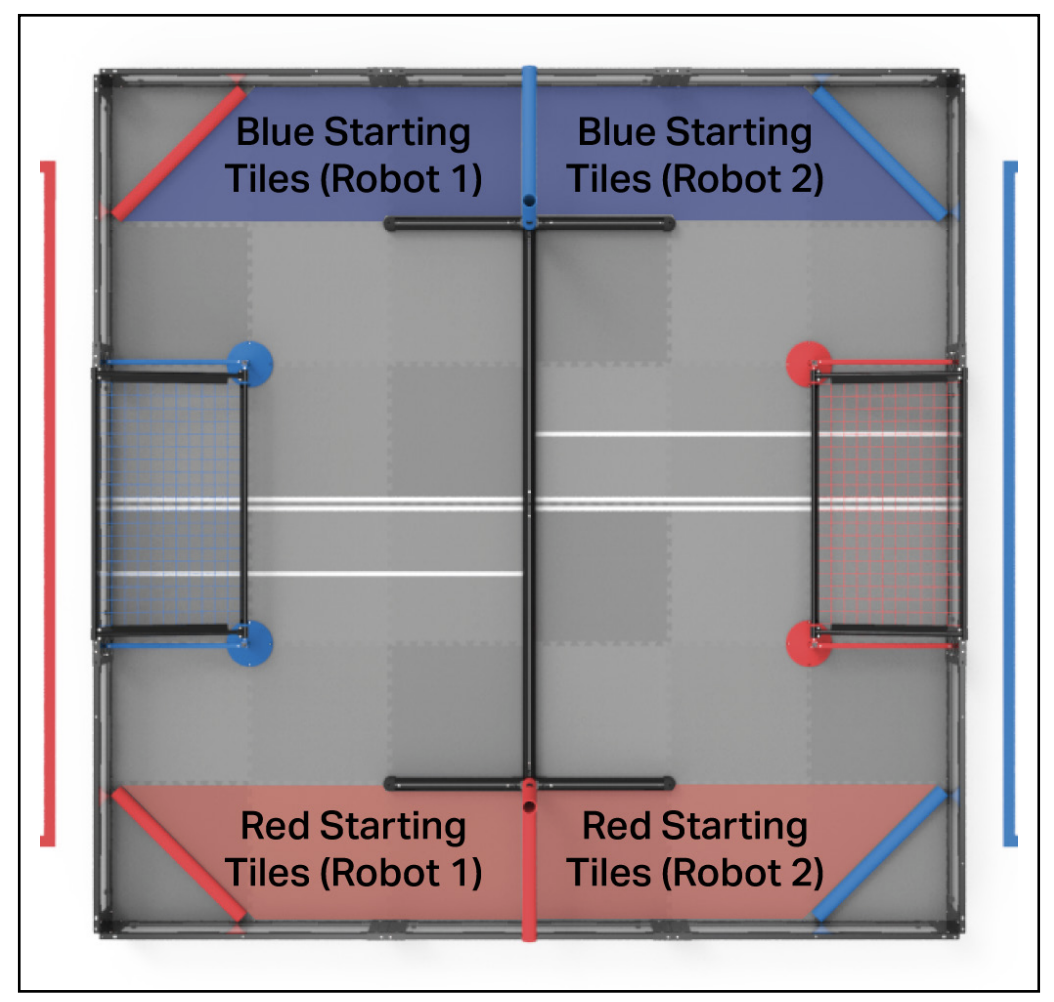

The game format is a 2-vs-2 match played over 2 minutes. Robots score by moving colored "Triballs" into goals and by elevating on their Alliance's Elevation Bars at the end — the higher the elevation, the more points. The existing VEX AI system architecture consists of three components:

- GPS Sensor: Tracks the robot's 2D position (X, Y) on the field

- AI Vision System: Recognizes and classifies field objects

- Sensor Fusion Map: Combines GPS and vision data to build a timestamped map of object positions

The critical limitation: the Sensor Fusion Map's camera-based field of view is narrow and forward-facing. Objects that move after being timestamped are still recorded at their original position. The system also lacks any Z-axis awareness — it cannot measure its own elevation height relative to other robots when competing for Elevation Bar points.
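The stale-position problem can be made concrete with a minimal sketch. The `Observation` structure, the object names, and the 2-second freshness threshold below are hypothetical illustrations, not the actual VEX AI data model; the sketch only shows how a timestamped map could flag entries that have gone unobserved for too long.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    """One timestamped sighting of a field object (hypothetical structure)."""
    object_id: str
    x_m: float
    y_m: float
    seen_at_s: float  # match time at which the object was last in view


def stale_objects(observations, now_s, max_age_s=2.0):
    """Return IDs of objects whose last sighting is older than max_age_s."""
    return [o.object_id for o in observations if now_s - o.seen_at_s > max_age_s]


# Placeholder map contents, one minute into a match.
fusion_map = [
    Observation("triball_1", 0.6, 1.2, seen_at_s=58.5),
    Observation("triball_2", 2.4, 3.0, seen_at_s=41.0),
    Observation("robot_blue_a", 1.8, 0.3, seen_at_s=59.8),
]

# Only triball_2 has been out of view for longer than the threshold.
print(stale_objects(fusion_map, now_s=60.0))
```

A planner could treat flagged entries as low-confidence targets rather than driving toward them directly.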

Figure 2. The VEX AI Dashboard — the Sensor Fusion Map shows only what is directly in front of the robot, similar to a spotlight's field of view.

Need Finding: Three Match Scenarios

To scope the problem, I analyzed three scenarios at increasing complexity — all within a standard 2-minute 2-vs-2 match:

Scenario 1: Easy (Match Start)

All robots and Triballs begin at fixed starting positions. No movement has occurred, so the Sensor Fusion Map's timestamped observations are completely accurate. The AI system performs at its best here because the world matches its internal model.

Figure 3. Starting areas for robots in a 2 vs. 2 VRC Over Under match — Red and Blue Alliance tiles dictate initial placement.

Scenario 2: Average (Mid-Match, 1 Minute In)

All robots and Triballs have moved significantly from their starting positions. Objects that moved out of a robot's field of view while it was looking elsewhere are now recorded at stale positions. The AI may navigate toward Triballs that are no longer there — missing scoring opportunities.

Figure 5. Movement of robots and Triballs halfway through the match — objects are far from their recorded starting positions.

Scenario 3: Hard (End of Match, Elevation Phase)

At the end of the match, robots must decide whether to attempt elevation on the Alliance's Elevation Bars. The height of elevation directly determines how many points are awarded. The existing system has no Z-axis sensor — it cannot know its own elevation height or compare it against a competing robot's elevation. This is a complete blind spot in the final, highest-stakes moments of every match.

Figure 6. Final positions of robots and Triballs at the end of the match — maximum complexity for the AI system.

Figure 7. A robot scored by elevating on the Alliance's Elevation Bar.

Figure 8. Elevation tier scoring — the higher the elevation, the more points awarded.

Baseline Design: SpotFi + RGB-D 3D Mapping

The root cause behind the failures in Scenarios 2 and 3 is the same: the robot's world model contains only what its own forward-facing sensors have recently observed. My baseline design proposes adding two complementary technologies to the existing VEX AI system architecture:

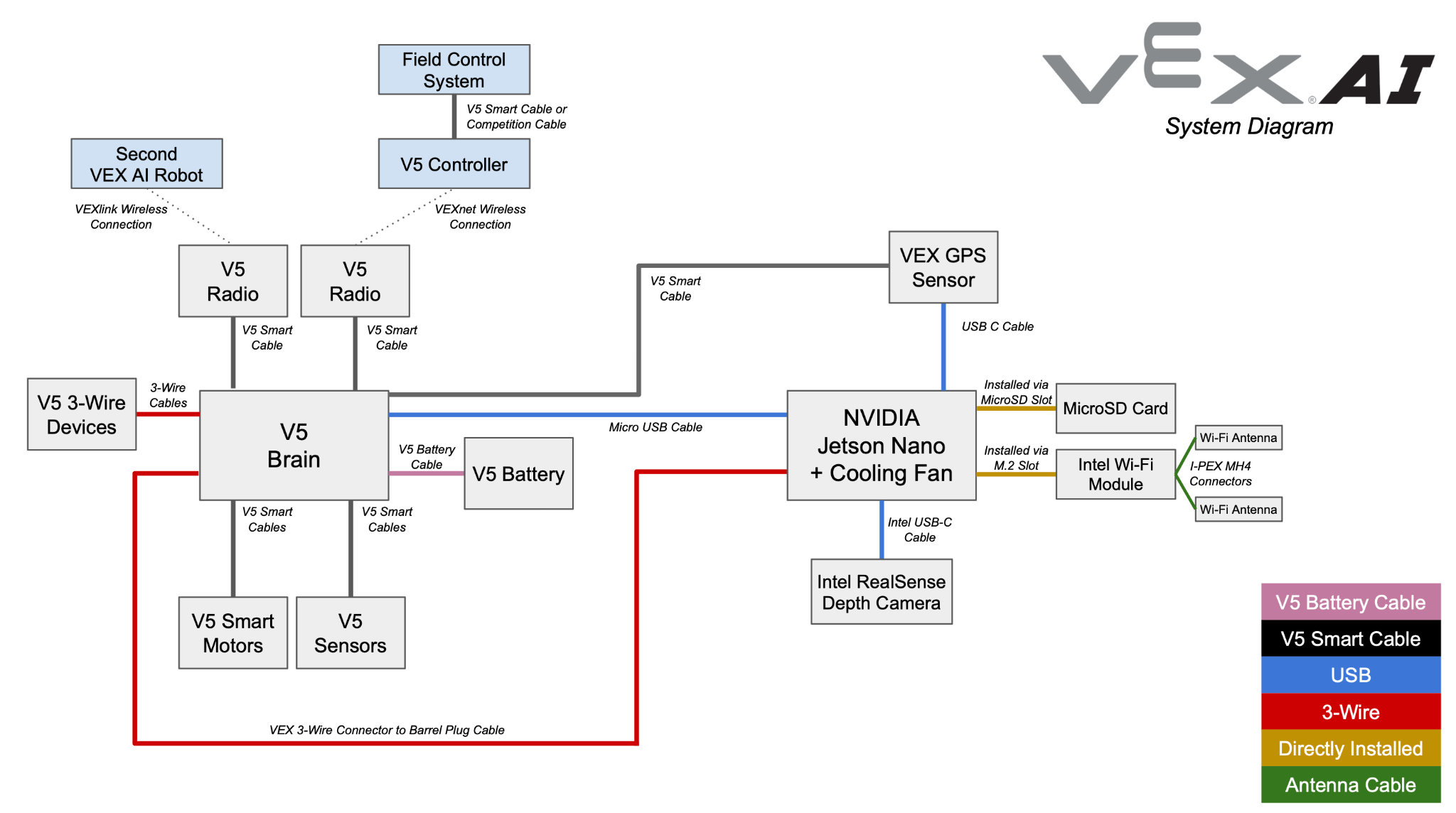

Figure 9. Original VEX AI System Diagram — the baseline architecture before proposed enhancements.

- SpotFi — a WiFi-based decimeter-level localization algorithm developed at Stanford that dramatically improves positional accuracy by computing Angle of Arrival (AoA) from WiFi channel state information, without requiring additional hardware beyond the robot's existing V5 Radio

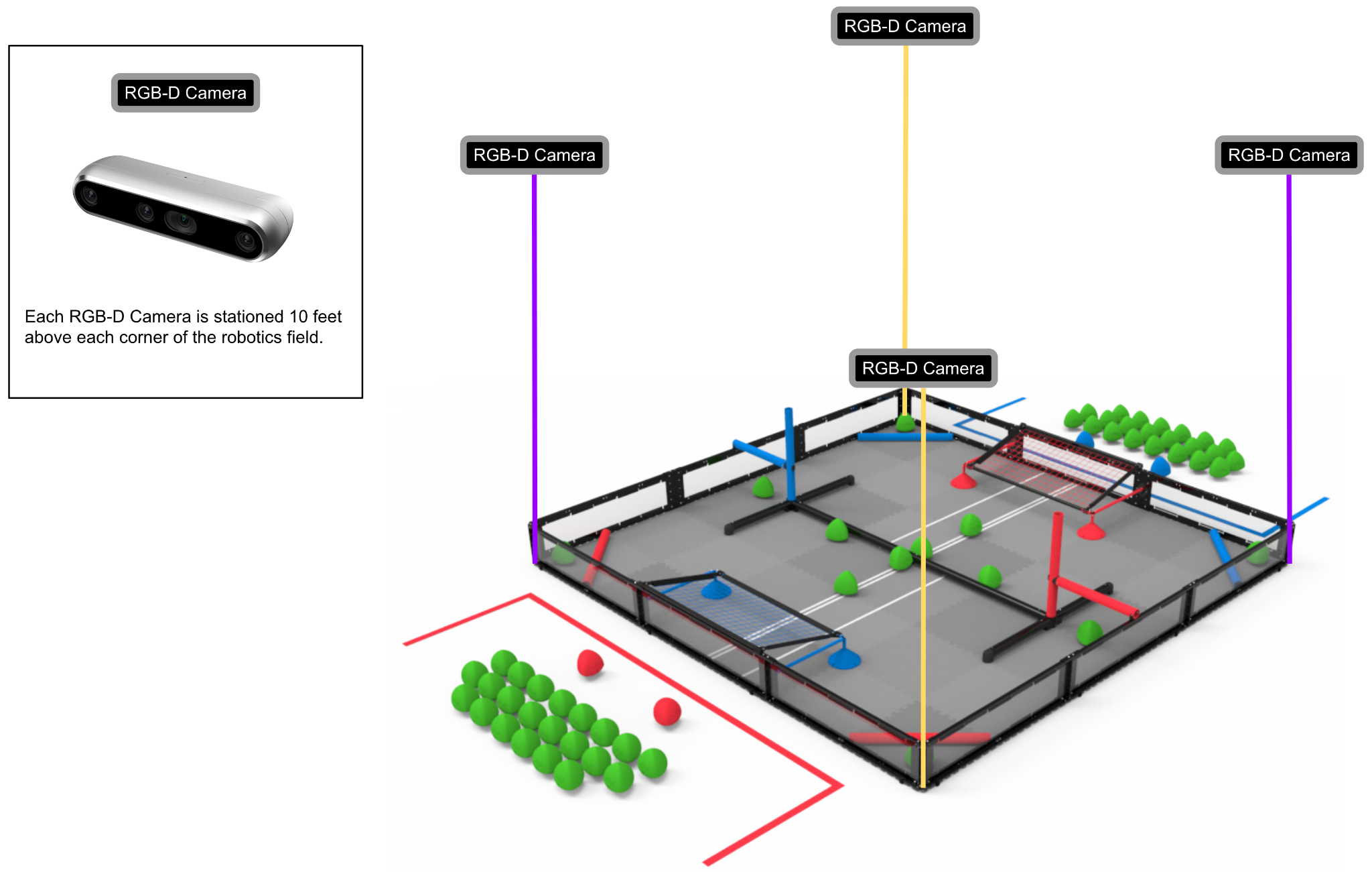

- RGB-D Mapping — four Intel RealSense Depth Camera D457 units mounted on 10-foot posts at each corner of the field, continuously capturing 3D depth images of the entire playing surface in real time

Both are integrated with the NVIDIA Jetson Nano processor already embedded in the VEX AI system, which is purpose-built for edge AI inference.

Data flow in the modified architecture:

- RGB-D cameras → NVIDIA Jetson Nano: real-time 3D depth data (up to 6 m / 19.7 ft range)

- NVIDIA Jetson Nano → Enhanced Sensor Fusion Map: runs the SpotFi and RGB-D Mapping algorithms

- Enhanced Sensor Fusion Map → AI Vision System + GPS Sensor: 3D field model with X, Y, Z coordinates

- Combined result: full spatial awareness plus elevation detection

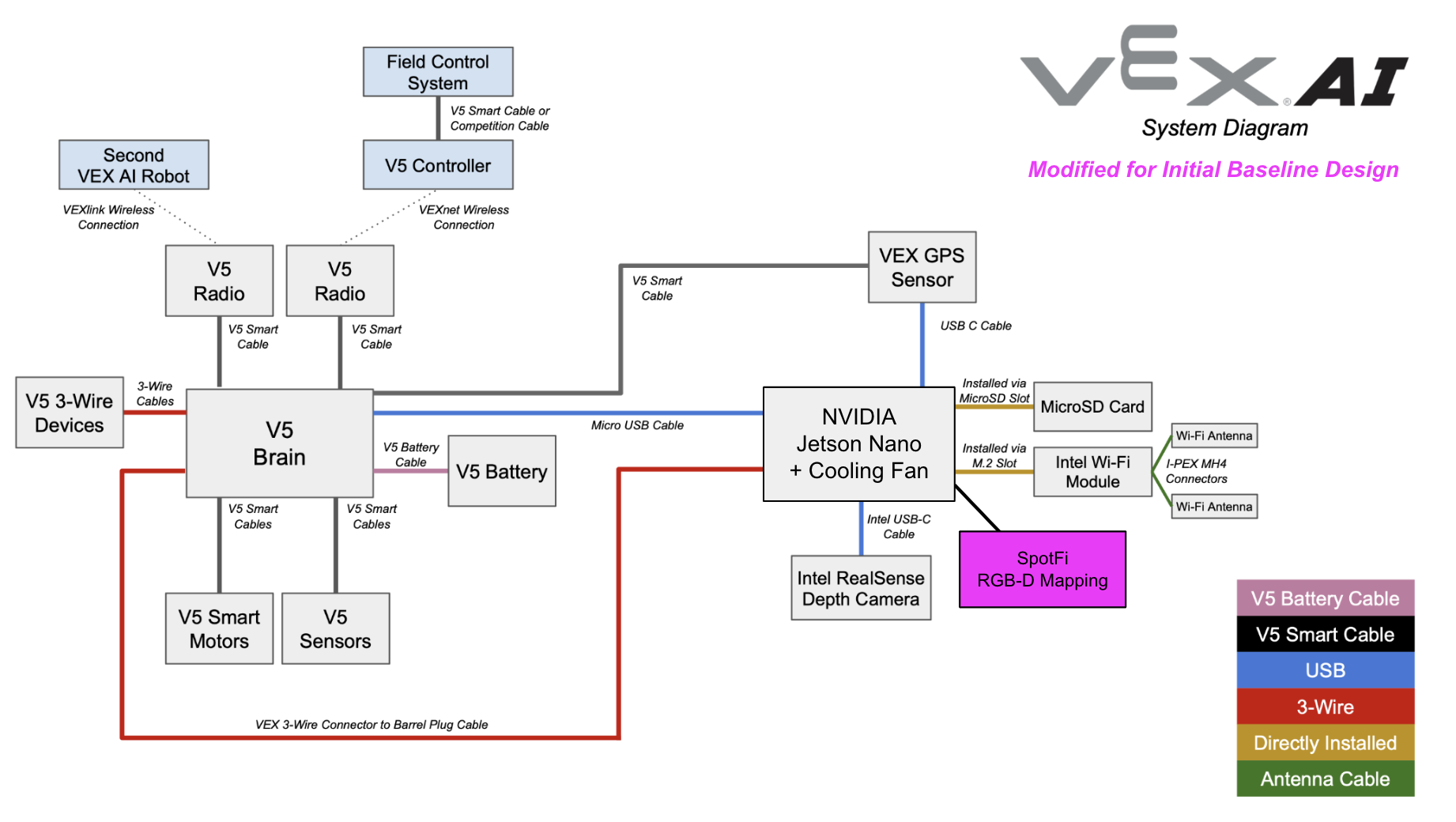

Figure 10. VEX AI System — Modified Architecture for Initial Baseline Design, integrating SpotFi and RGB-D Mapping.
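To illustrate the geometric idea behind Angle of Arrival, here is a simplified sketch of the two-antenna phase relation, Δφ = 2π·d·sin(θ)/λ. This is not the SpotFi algorithm itself, which applies super-resolution estimation across many subcarriers and antennas; the 5 GHz carrier and half-wavelength antenna spacing are assumptions chosen only to make the arithmetic readable.

```python
import math

SPEED_OF_LIGHT_M_S = 299_792_458.0


def angle_of_arrival_deg(phase_diff_rad, antenna_spacing_m, carrier_hz):
    """Estimate AoA from the phase difference between two adjacent antennas.

    Uses the far-field relation: phase_diff = 2*pi*d*sin(theta) / wavelength.
    """
    wavelength_m = SPEED_OF_LIGHT_M_S / carrier_hz
    sin_theta = phase_diff_rad * wavelength_m / (2 * math.pi * antenna_spacing_m)
    # Clamp so measurement noise cannot push the value outside asin's domain.
    sin_theta = max(-1.0, min(1.0, sin_theta))
    return math.degrees(math.asin(sin_theta))


carrier_hz = 5.0e9  # assumed carrier frequency, for illustration only
spacing_m = (SPEED_OF_LIGHT_M_S / carrier_hz) / 2  # assumed half-wavelength spacing

# A quarter-cycle phase lead corresponds to roughly 30 degrees off broadside.
print(angle_of_arrival_deg(math.pi / 2, spacing_m, carrier_hz))
```

With angle estimates from several receivers at known positions, a position fix follows from intersecting the bearing lines, which is the step a full localization pipeline would add.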

| Component | Role | Addresses |
|---|---|---|
| Intel RealSense D457 (×4) | 3D depth capture of entire field | Stale object positions, Z-axis blindness |
| SpotFi Algorithm | Decimeter-level WiFi localization | GPS positional accuracy |
| NVIDIA Jetson Nano | Edge AI processing | Real-time computation of 3D map |
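A minimal sketch of how a depth pixel could become a field-frame height, using the standard pinhole camera model. The intrinsics (`fx`, `fy`, `cx`, `cy`), the pixel values, and the straight-down camera pose are all hypothetical; cameras on corner posts would be tilted toward the field and would need a calibrated rotation instead.

```python
def deproject(u, v, depth_m, fx, fy, cx, cy):
    """Convert pixel (u, v) with measured depth into a camera-frame 3D point."""
    x = (u - cx) * depth_m / fx
    y = (v - cy) * depth_m / fy
    return (x, y, depth_m)


def to_field_frame(p_cam, rotation, translation):
    """Apply a rigid transform (3x3 rotation, then translation) to a point."""
    return tuple(
        sum(rotation[i][j] * p_cam[j] for j in range(3)) + translation[i]
        for i in range(3)
    )


# Assumed pose: camera 3.048 m (10 ft) above the field origin, looking straight
# down, so the camera's depth axis points along the field's negative Z axis.
LOOK_DOWN = [[1, 0, 0], [0, -1, 0], [0, 0, -1]]
CAMERA_POSITION_M = (0.0, 0.0, 3.048)

p_cam = deproject(u=320, v=240, depth_m=2.548, fx=600.0, fy=600.0, cx=320.0, cy=240.0)
x, y, z = to_field_frame(p_cam, LOOK_DOWN, CAMERA_POSITION_M)
print(f"height above field: {z:.3f} m")
```

The Z value obtained this way is the quantity the existing 2D map lacks, and it could be compared between robots during the elevation phase.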

Figure 11. RGB-D stations above all four field corners — full 3D coverage.

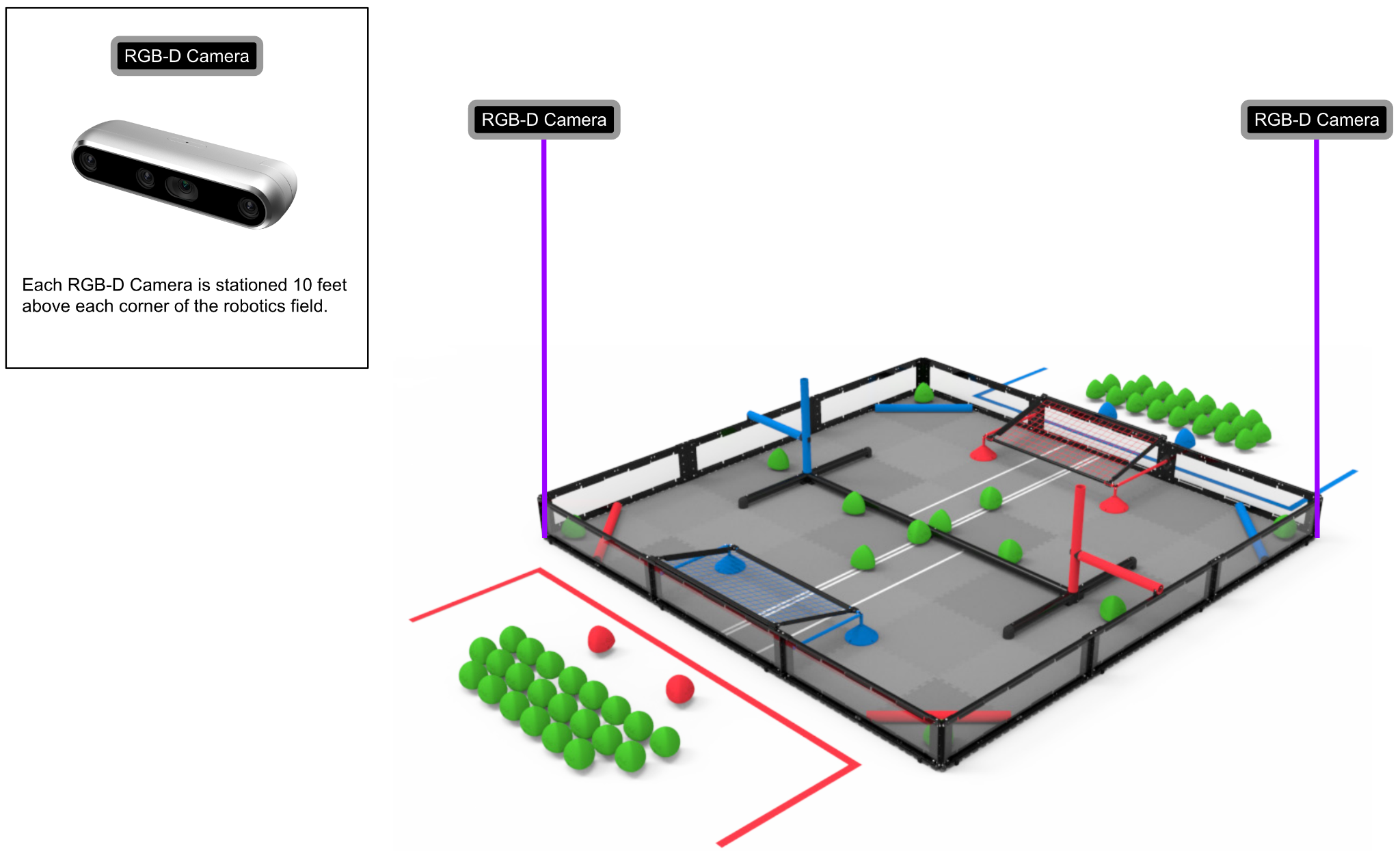

Figure 12. Cost-reduction alternative: 2 cameras at opposite corners (~$998 vs. ~$1,996).

A cost-reduction alternative is to use two RGB-D cameras at opposite corners rather than four, which halves hardware cost from ~$1,996 to ~$998 at the expense of some 3D rendering coverage. Whether the cost savings outweigh the coverage reduction requires empirical evaluation.

Design Refinement: Peer-to-Peer Decentralized Coordination

The baseline design improves individual robot perception. The design refinement goes further: it makes all four robots on the field share what they see with each other, creating a distributed sensor network with no single point of failure.

Figure 13. VEX AI System — Modified Architecture for Design Refinement, adding P2P decentralized coordination across all four robots.

Using the V5 Radio already on each robot, a Peer-to-Peer (P2P) system with decentralized coordination would allow each robot to broadcast its GPS position, Vision System observations, and Sensor Fusion Map data to all peers in real time. Each robot receives and integrates the field awareness of all four robots simultaneously.

- Distributed computing: Data collection and processing load is spread across all four robots — faster throughput, no central bottleneck

- Improved resilience: If one robot's sensors degrade, the other three continue to provide field awareness. No single failure cascades to the whole system

- Full-field visibility: Combined with RGB-D cameras, the P2P network gives every robot access to 360° field awareness — not just its own forward-facing view

- Better temporal decisions: Shared observations allow each AI to reason about where all objects are right now, not just where they were when last individually seen

Implementation requires downloading a P2P software application into VEXos (the V5 Brain's operating system). The application creates overlay networks, handles peer routing, distributes data, and achieves load balancing across all connected robots.
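One way the shared world model could be assembled is sketched below: each robot contributes its own map, and the merge keeps the most recent sighting of every object. The map layout `{object_id: (x, y, z, seen_at_s)}` and the function name are hypothetical, and the transport over the V5 Radio is deliberately left out.

```python
def merge_peer_maps(*peer_maps):
    """Merge per-robot maps, keeping the newest sighting of each object.

    Each map is {object_id: (x_m, y_m, z_m, seen_at_s)} (assumed layout).
    """
    merged = {}
    for peer_map in peer_maps:
        for object_id, sighting in peer_map.items():
            current = merged.get(object_id)
            # Index 3 holds the timestamp; a later timestamp wins.
            if current is None or sighting[3] > current[3]:
                merged[object_id] = sighting
    return merged


# Placeholder observations from two robots.
robot_a = {"triball_1": (0.6, 1.2, 0.0, 41.0), "triball_2": (2.4, 3.0, 0.0, 55.0)}
robot_b = {"triball_1": (1.5, 2.1, 0.0, 58.0)}

shared = merge_peer_maps(robot_a, robot_b)
print(shared["triball_1"])  # robot_b saw triball_1 more recently
```

Last-writer-wins is the simplest merge rule; a real system would also need to handle clock differences between robots and conflicting observations.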

How to Evaluate It

To rigorously compare the baseline design against the design refinement, controlled experiments would be run across at least 50 identical 2-minute matches — same starting positions, same Triball placements, same field conditions. Three metrics would be tracked:

Metric 1: Gameplay Scoring Performance

Total points scored per match for each approach. Statistical trends would reveal whether the P2P refinement produces consistently higher scores across many runs — controlling for the inherent variability of dynamic gameplay.

Metric 2: CPU Runtime

Milliseconds required by the V5 Brain and Jetson Nano to execute specific tasks: navigating to a scoring goal, processing incoming sensor data, communicating with a peer robot via V5 Radio. Lower runtime indicates more efficient spatial reasoning.

Metric 3

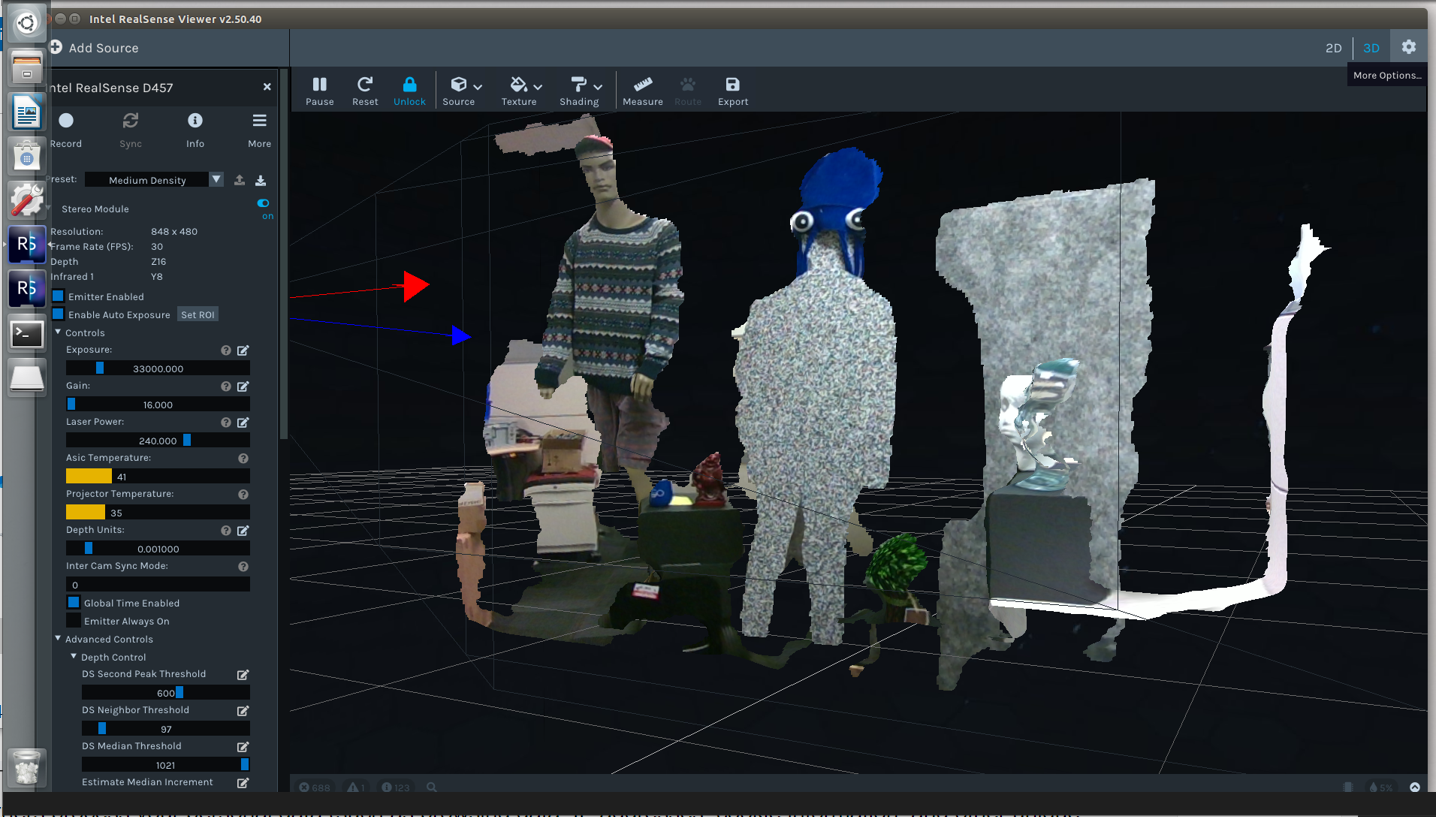

3D Map Quality

Qualitative comparison of 3D renderings from the Intel RealSense D457 viewer — evaluating whether the P2P-enhanced system produces faster and more complete 3D field reconstructions than the baseline four-camera-only approach.

Figure 14. Intel RealSense D457 3D mapping rendering — used to qualitatively evaluate map quality across both designs.

| Metric | Data Type | Storage |
|---|---|---|
| Match scores | Integer (points) | CSV |
| CPU runtime | Float (milliseconds) | CSV |
| Object positions | Float (X, Y, Z coordinates) | CSV + RAM (Jetson Nano) |
| 3D spatial images | Depth image frames | Intel RealSense platform |
| Source code | VEXos application | V5 Brain + PC backup |
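Because both designs would be run on identical match setups, the scores could be compared as paired samples. The sketch below computes the mean per-match difference and a paired t statistic with the standard library. The score lists are placeholders that only exercise the function; they are not results from any experiment.

```python
import math
import statistics


def paired_summary(baseline_scores, refined_scores):
    """Return (mean difference, sample std dev, paired t statistic)."""
    if len(baseline_scores) != len(refined_scores):
        raise ValueError("both designs must be scored on the same matches")
    diffs = [r - b for b, r in zip(baseline_scores, refined_scores)]
    mean_diff = statistics.mean(diffs)
    sd = statistics.stdev(diffs)  # requires at least two matches
    if sd == 0:
        raise ValueError("all differences are identical; t statistic undefined")
    t_stat = mean_diff / (sd / math.sqrt(len(diffs)))
    return mean_diff, sd, t_stat


# Placeholder scores for four matches (illustrative values only).
baseline = [10, 12, 9, 11]
refined = [12, 13, 12, 12]

mean_diff, sd, t_stat = paired_summary(baseline, refined)
print(f"mean difference: {mean_diff:.2f} points, t = {t_stat:.2f}")
```

With the planned 50 or more matches, the t statistic would be compared against a t distribution with n - 1 degrees of freedom; the same function applies to the CPU runtime column.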

System Resilience & Failure Modes

Any robust system design must account for component failure. For this architecture:

- 1–2 RGB-D cameras fail: Remaining cameras still provide adequate 3D coverage; match continues

- 3+ RGB-D cameras fail: 3D mapping is severely degraded; the system falls back to the original GPS + Sensor Fusion Map baseline

- One robot's VEX AI System degrades: Under P2P, the three remaining robots continue sharing observations — partial degradation, not total failure. Without P2P, a single robot failure isolates completely

- V5 Radio or WiFi antenna failure: P2P communication is severed for that robot; it operates on its own sensors only, reverting to baseline behavior
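The fallback rules above can be expressed as a small decision function. This is a sketch under the assumption of a four-camera setup; the mode names and function signature are hypothetical labels, not part of any VEX software.

```python
def perception_mode(working_cameras, radio_ok):
    """Map component health to an operating mode (hypothetical mode names).

    With four cameras installed, losing one or two leaves at least two working,
    which the design treats as adequate; losing three or more triggers fallback.
    """
    mapping = "rgbd_3d" if working_cameras >= 2 else "baseline_gps_fusion"
    # A robot without a working radio relies on its own sensors only.
    sharing = "p2p_shared" if radio_ok else "local_only"
    return mapping, sharing


print(perception_mode(working_cameras=4, radio_ok=True))
print(perception_mode(working_cameras=2, radio_ok=True))
print(perception_mode(working_cameras=1, radio_ok=False))
```

Keeping the two decisions independent mirrors the layered design: a camera failure and a radio failure each degrade one capability without disabling the other.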

Security: no personal information is stored on the V5 Brain, Jetson Nano, or accompanying software. Compliance: all robots must conform to the official Over Under Game Manual rules at all times. Maintenance: all associated firmware and software must be kept up to date before each match.

Key Takeaways

- The VEX AI system's core limitation is perceptual, not computational. The existing hardware is capable — it just cannot see the full field simultaneously or in 3D

- RGB-D mapping solves the field-of-view problem by providing an always-on, overhead 3D view of the entire playing surface, independent of each robot's orientation

- SpotFi improves localization accuracy at decimeter-level resolution using existing WiFi hardware — a high-value improvement with minimal added cost

- P2P coordination is the multiplier. Individual robots with better sensors are good; four robots sharing a unified, real-time field model is fundamentally more capable

- Graceful degradation matters in robotics. The layered fallback architecture ensures the system continues operating meaningfully even when individual components fail

What I Learned & Why It Matters to Employers

This project required thinking like a systems engineer: starting with a concrete failure mode, tracing it to a root cause (perceptual limitation), proposing a minimum-viable fix (SpotFi + RGB-D), and then designing a superior refinement (P2P coordination) with measurable evaluation criteria. The skills demonstrated here — need finding, system architecture, iterative design refinement, failure mode analysis — apply directly to any engineering role building complex, multi-component systems in the real world.

Conclusion & Reflections

The VEX AI Robotics Competition is an ambitious platform that pushes autonomous robotics into a competitive sports format. Its current limitations are not fundamental — they are engineering problems with engineering solutions. Adding 3D depth perception from four field-corner cameras, improving localization accuracy with SpotFi, and enabling P2P data sharing between robots creates a system where each robot's AI operates with full field awareness rather than a narrow forward-facing view.

The design refinement — P2P decentralized coordination — is particularly compelling because it transforms four independently operating robots into a collaborative network. The whole becomes greater than the sum of its parts: every robot benefits from every other robot's observations, and the system degrades gracefully rather than catastrophically when individual components fail.

| Design Component | Problem Solved |
|---|---|
| SpotFi localization | Improved X/Y positional accuracy ✓ |
| RGB-D 3D Mapping (×4 cameras) | Full-field 3D visibility, Z-axis awareness ✓ |
| P2P Decentralized Coordination | Distributed field awareness, load balancing ✓ |
| Graceful degradation fallbacks | Resilient to partial component failure ✓ |
| Evaluation framework defined | Scoring, CPU runtime, 3D map quality ✓ |

Read the Full Paper

Submitted for CS 6675 at Georgia Tech (Spring 2024).